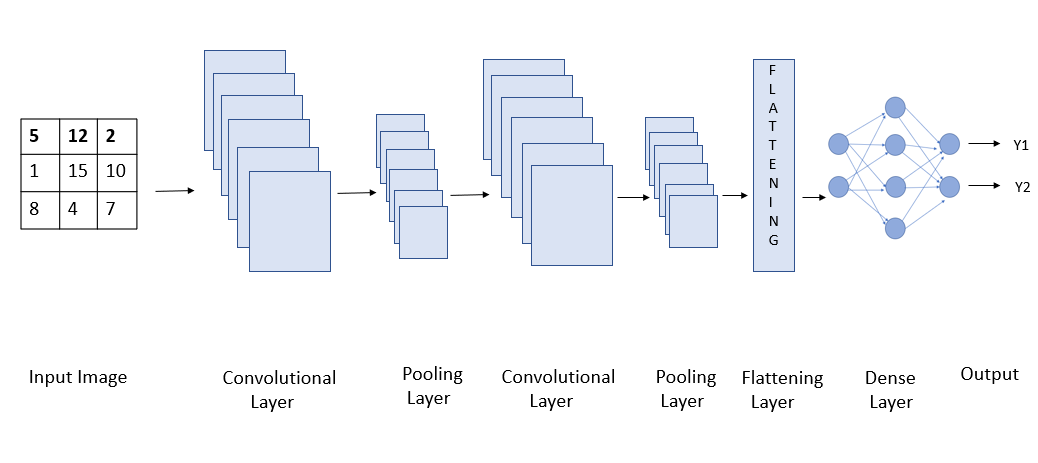

Deep Learning with CIFAR-10. Neural Networks are the programmable… | by Aarya Brahmane | Towards Data Science

How to convert to bits / dim for VQ-VAE CIFAR-10 experiments ? · Issue #131 · deepmind/sonnet · GitHub

Bits per pixel for models (lower is better) using logit transforms on... | Download Scientific Diagram

OpenAI Sparse Transformer Improves Predictable Sequence Length by 30x | by Synced | SyncedReview | Medium

![[PDF] Invertible Residual Networks | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/806bb0d6688ce0d93fbe34112922060506acbf6c/7-Figure9-1.png)